The author is amazing; this is a very high-level analysis of optical modules. The next Lumentum will be born from this table!

🔥 The real bottleneck of AI is shifting from computing power to "light": why photonic technology may determine the winners of the next stage

The explosion of generative AI has brought a previously overlooked issue to the forefront—data transmission.

When training clusters scale from thousands of GPUs to tens or even hundreds of thousands, and model sizes surpass the 100T-parameter level, raw computing power is no longer the only variable.

What's truly starting to hold things back is the network.

In some unoptimized deployments, GPUs spend 30%–50% of their time in a "waiting for data" state.

What does this mean?

The problem is not a lack of computing power; it is that the available computing power "can't be utilized effectively."

This is why merely focusing on the GPUs themselves can no longer explain the real bottleneck in AI infrastructure.
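The utilization point above can be made concrete with a few lines of arithmetic. This is a minimal sketch, not a measurement: the function names and the 10,000-GPU cluster size are hypothetical, and only the 30%–50% stall range comes from the text.

```python
def effective_gpus(num_gpus: int, stall_fraction: float) -> float:
    """GPU-equivalents doing useful work when each GPU idles for stall_fraction of the time."""
    if not 0.0 <= stall_fraction < 1.0:
        raise ValueError("stall_fraction must be in [0, 1)")
    return num_gpus * (1.0 - stall_fraction)


def cost_multiplier(stall_fraction: float) -> float:
    """How much more each useful GPU-hour costs compared with a stall-free cluster."""
    if not 0.0 <= stall_fraction < 1.0:
        raise ValueError("stall_fraction must be in [0, 1)")
    return 1.0 / (1.0 - stall_fraction)


if __name__ == "__main__":
    cluster_size = 10_000  # hypothetical cluster size, for illustration only
    for stall in (0.30, 0.50):  # the 30%-50% waiting range quoted in the text
        print(
            f"stall={stall:.0%}: "
            f"{effective_gpus(cluster_size, stall):,.0f} effective GPUs, "
            f"{cost_multiplier(stall):.2f}x cost per useful GPU-hour"
        )
```

Under these assumptions, a cluster that waits half the time delivers the work of half as many GPUs, so every useful GPU-hour costs twice as much.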

Copper cables are still effective over short distances, but problems are rapidly emerging:

Limited headroom for bandwidth growth

Ever-shorter usable reach as speeds rise

Sharply rising power consumption and heat generation

As speeds continue to push upwards, this path is beginning to approach its physical limits.

Thus, a new critical layer is emerging—optical interconnects and silicon photonics technology.

The problem it solves is not "being a bit faster," but "whether we can continue to scale."

Higher bandwidth

Lower energy per bit

Stronger scalability
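Why "energy per bit" matters at scale follows from a simple relation: link power equals data rate times energy per bit. The sketch below illustrates it. The pJ/bit figures, the 800 Gb/s rate, and the link count are placeholder assumptions chosen for easy arithmetic, not measured values for copper or optical links.

```python
def link_power_watts(rate_gbps: float, energy_pj_per_bit: float) -> float:
    """Power drawn by one link: (bits per second) x (joules per bit)."""
    bits_per_second = rate_gbps * 1e9
    joules_per_bit = energy_pj_per_bit * 1e-12
    return bits_per_second * joules_per_bit


if __name__ == "__main__":
    rate_gbps = 800.0    # hypothetical per-link data rate
    num_links = 100_000  # hypothetical number of links in a large cluster
    # Placeholder energy-per-bit values, for illustration only.
    for label, pj_per_bit in (("higher energy per bit", 15.0),
                              ("lower energy per bit", 5.0)):
        per_link = link_power_watts(rate_gbps, pj_per_bit)
        total_kw = per_link * num_links / 1000.0
        print(f"{label}: {per_link:.1f} W per link, {total_kw:,.0f} kW across all links")
```

Because the relation is linear, any saving in energy per bit is multiplied by both the data rate and the number of links, which is why it turns into a scaling question rather than a small efficiency gain.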

As AI systems shift from "single-machine performance" to "system-level collaboration," optical connections are moving from an option to a necessity.

A more critical variable lies in capital expenditure.

AI-related CapEx is projected to reach $527 billion in 2026.

This money won't just flow to GPUs.

It must also flow to:

Connectivity

Transmission

Packaging

Testing

Otherwise, the entire system cannot operate.

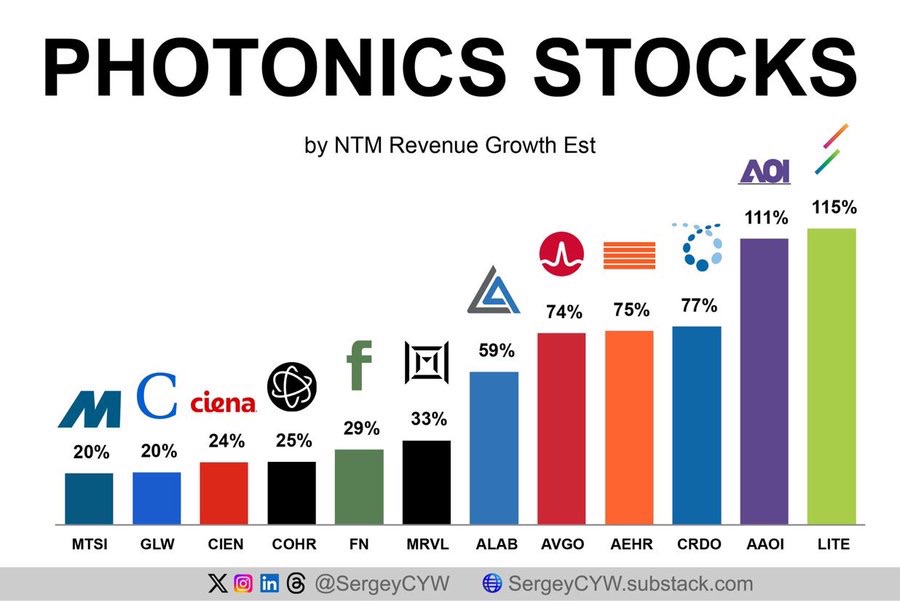

This is also why photonics-related companies are starting to see significant upward revisions in growth expectations:

$Macom Tech(MTSI.US)

$Corning(GLW.US)

$Ciena(CIEN.US)

$Coherent Corp.(COHR.US)

$Fabrinet(FN.US)

$Marvell Tech(MRVL.US)

$Astera Labs(ALAB.US)

$Aehr Test(AEHR.US)

$Credo Tech(CRDO.US)

$Applied Optoelectronics(AAOI.US)

$Lumentum(LITE.US)

These companies essentially occupy the layer that "truly connects the computing power."

Yet the market's current pricing still focuses more on "the computing power itself."

The problem is:

If GPUs continue to expand, but the network can't keep up,

then the bottleneck will naturally shift.

Whoever constrains performance will have pricing power.

This is very similar to the early days of cloud computing:

First it was servers → then storage → then the network

Now, AI is repeating this path.

As copper cables gradually approach their physical boundaries, optical interconnects are beginning to become the "must-have" infrastructure layer.

This is no longer a question of technological choice, but of whether the system can scale.

In other words:

What limits AI's economic viability in the future may no longer be computing power costs, but data transmission efficiency.

While the market is still discussing "who has the most GPUs,"

the real question has already become:

Who can make these GPUs work together efficiently?

If this judgment holds true, then pricing power in the next stage

will likely lie not in computing power, but in connectivity.

Only one question remains:

When the bottleneck shifts from GPUs to the network, will you continue to bet on the most obvious winner, or start to re-evaluate the truly scarce layer in the entire chain?
