Cursor's new in-house model claims to beat Opus 4.6 with prices "slashed to the ankles"; netizens mock it as a "Kimi K2.5 wrapper," and Musk confirms

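Cursor has released a new model, Composer 2, claiming that it outperforms Opus 4.6 at a far lower price. Within hours, its underlying base was revealed to be Moonshot AI's Kimi K2.5, drawing "wrapper" mockery from Elon Musk and other users. A Cursor co-founder then apologized and acknowledged that the base model had not been credited, while stressing that the team had done extensive reinforcement learning. Moonshot AI confirmed that the arrangement is a compliant, licensed partnership and offered its congratulations.

The AI coding tool Cursor made a high-profile launch of its in-house model Composer 2, claiming performance above Claude Opus 4.6 at a sharply lower price. In less than three hours, developers exposed that its underlying base is Kimi K2.5, the open-source model from China's Moonshot AI.

The "in-house" controversy swept through the AI community, with Musk personally weighing in to confirm the finding. It ended with a public apology from a Cursor co-founder and a congratulatory post from Kimi's official account.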

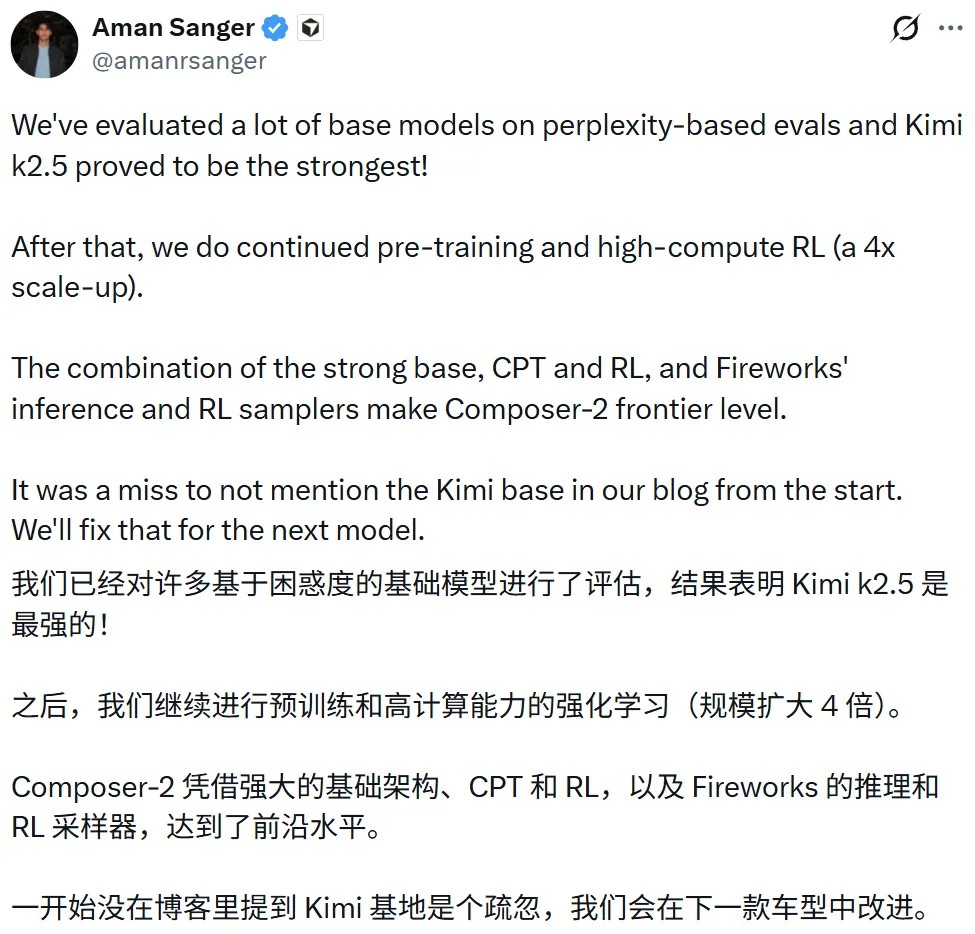

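On March 21, according to Hard AI (硬 AI), Cursor co-founder Aman Sanger acknowledged in a post after the story took off: "Not mentioning the Kimi base model in our blog from the start was an oversight on our part, and we will fix this for the next model."

Moonshot AI's official account responded shortly afterward: "Congratulations to Cursor on launching Composer 2. We are proud to see Kimi K2.5 serve as the base model. This is the open-source ecosystem we love." Moonshot AI also clarified that Cursor accesses Kimi K2.5 through the reinforcement learning and inference platform hosted by Fireworks AI, as part of an authorized commercial partnership.

Outperforming Opus 4.6, with prices "slashed to the ankles"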

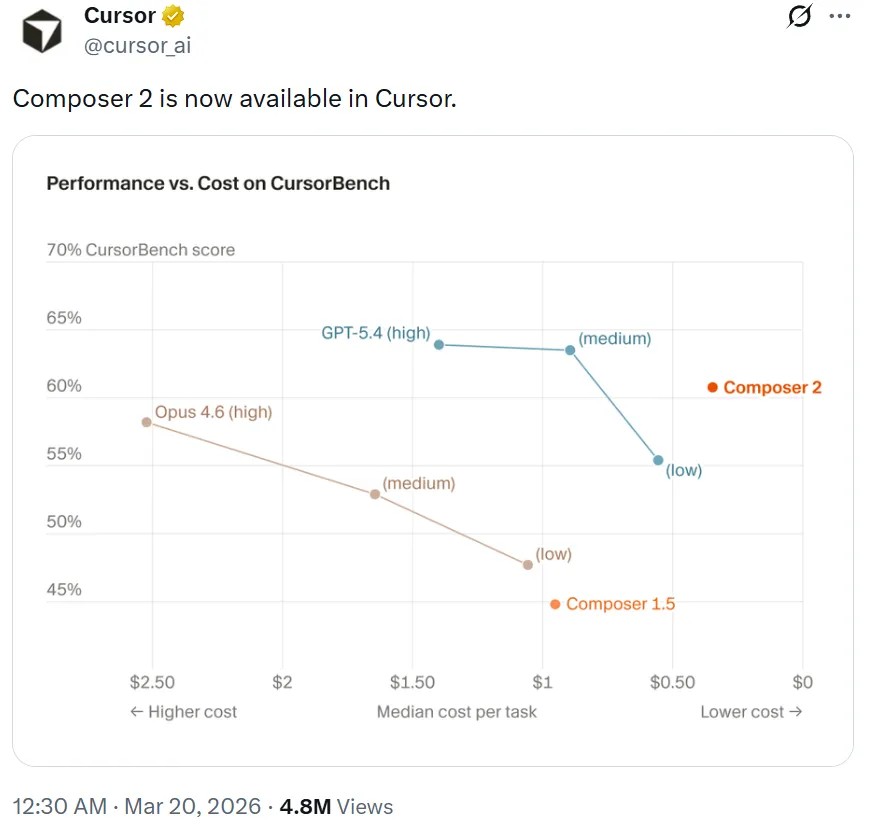

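Cursor officially launched Composer 2 this Friday. In its launch blog post, the company claimed that the model improved substantially on every benchmark it measures, including Terminal-Bench 2.0 and SWE-bench Multilingual.

On Terminal-Bench 2.0, which measures an agent's ability to operate in a terminal, Composer 2 scores between GPT-5.4 and Claude Opus 4.6. On the CursorBench benchmark, its cost-performance clearly exceeds that of both models.

Pricing is the core selling point of the release. Standard Composer 2 costs $0.5 per million input tokens and $2.5 per million output tokens, a reduction relative to Claude Opus 4.6 so steep that it amounts to being "slashed to the ankles."

Cursor also introduced a faster variant, Composer 2 Fast, priced at $1.5 per million input tokens and $7.5 per million output tokens. It keeps the price advantage while emphasizing response speed.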
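
To make the quoted rates concrete, the following is a minimal sketch of how a per-request bill could be estimated from them. The per-million prices come from the figures above; the dictionary keys, the function name, and the example token counts are hypothetical and are not taken from Cursor's documentation.

```python
# Prices in USD per million tokens, as quoted in the article.
# The keys "composer-2" and "composer-2-fast" are illustrative labels only.
PRICES_USD_PER_MILLION = {
    "composer-2": {"input": 0.5, "output": 2.5},
    "composer-2-fast": {"input": 1.5, "output": 7.5},
}


def request_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Return the estimated cost in USD for one request."""
    price = PRICES_USD_PER_MILLION[model]
    total = input_tokens * price["input"] + output_tokens * price["output"]
    return total / 1_000_000


if __name__ == "__main__":
    # Hypothetical workload: 2M input tokens and 400k output tokens.
    for name in PRICES_USD_PER_MILLION:
        print(name, request_cost(name, 2_000_000, 400_000))
```

At these rates the Fast variant costs three times as much as the standard one for any mix of input and output tokens, since both of its prices are exactly triple.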

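Cursor attributes this cost-performance breakthrough to a new reinforcement learning method, and stresses that it is "a capability that was genuinely trained in, not an inference trick."

Base model exposed less than 3 hours after launch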

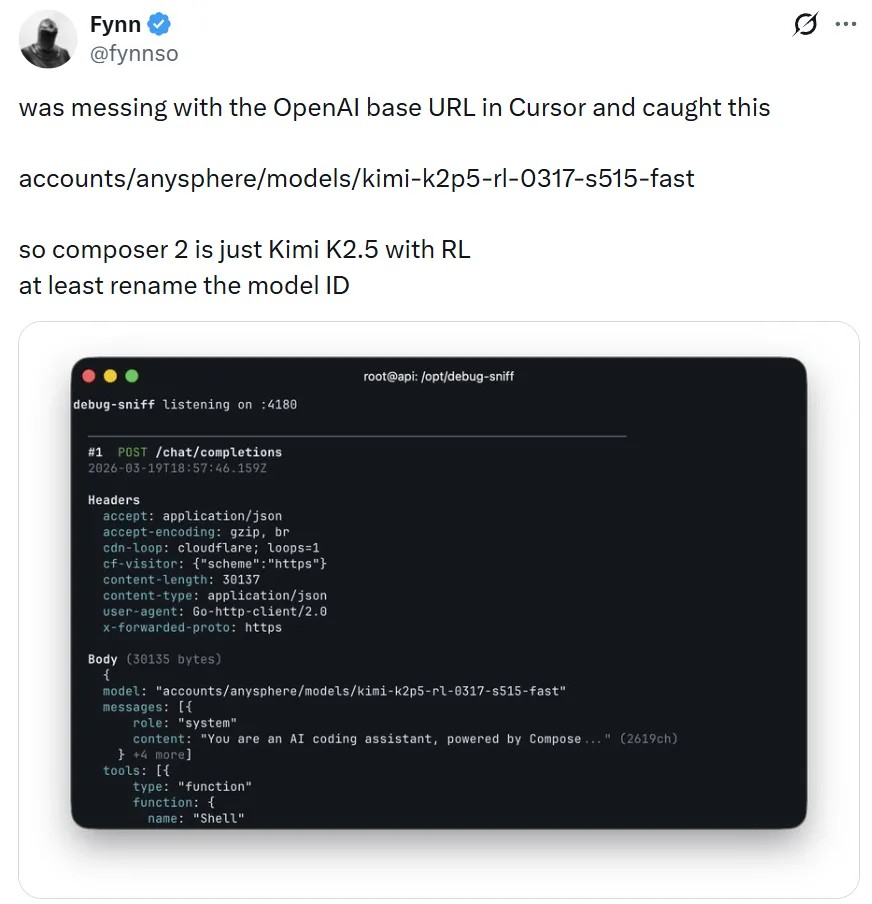

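Composer 2's moment in the spotlight was brief, however. Less than three hours after launch, X user @fynnso found that the model's ID appeared as kimi-k2p5-rl-0317-s515-fast, and concluded: "Composer 2 is really just Kimi K2.5 with reinforcement learning."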
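
The inference from the model ID can be sketched as a simple string check. This is only an illustration of the reasoning: reading "k2p5" as "K2.5" (with "p" standing for the decimal point) and "rl" as "reinforcement learning" is an assumption, the function names are hypothetical, and the remaining segments ("0317", "s515", "fast") are left uninterpreted because the source does not explain them.

```python
from typing import List


def split_model_id(model_id: str) -> List[str]:
    """Split a hyphen-separated model ID into lowercase segments."""
    return model_id.lower().split("-")


def looks_like_kimi_k2_5(model_id: str) -> bool:
    """Heuristic: does the ID start with the segments 'kimi' and 'k2p5'?

    Treating 'k2p5' as shorthand for 'K2.5' is an assumption, not a
    documented naming convention.
    """
    parts = split_model_id(model_id)
    return len(parts) >= 2 and parts[0] == "kimi" and parts[1] == "k2p5"


if __name__ == "__main__":
    model_id = "kimi-k2p5-rl-0317-s515-fast"
    print(split_model_id(model_id))
    print(looks_like_kimi_k2_5(model_id))
```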

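The finding spread quickly across X, Hacker News, and other technical communities, accompanied by a flood of memes and discussion. Musk replied directly under @fynnso's post, "Yeah, it's Kimi 2.5," which further amplified the story.

Discussion on Reddit's r/singularity was just as heated. One user commented:

"The funniest part is that everyone was praising Composer 2 as a huge leap, when it was someone else's model the whole time. It makes you wonder how many so-called 'proprietary models' are really just open-source fine-tunes with a logo slapped on."

Others argued that Cursor's real moat is the task-solving data it has accumulated from heavy developer usage, not pre-training itself: "Every investor knows they aren't building their own foundation model. They should have been upfront about it from the start."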

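Cursor apologizes, Kimi confirms licensed partnership

Facing public pressure, the Cursor team responded directly.

Aman Sanger publicly confirmed that the team had run perplexity evaluations on several base models and that Kimi K2.5 "proved to be the strongest." The team then layered continued pre-training and high-compute reinforcement learning at 4x scale on top of it, and deployed the result through Fireworks AI's inference and RL samplers.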

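Lee Robinson, Cursor's VP of developer education, disclosed further technical details: roughly one quarter of the compute in the final model comes from the base model, and the remaining three quarters comes from Cursor's own training.

Robinson also said that although Composer 2 is built on an open-source model, the team will carry out full pre-training in the future.

Moonshot AI's official account subsequently made its position explicit, emphasizing that the collaboration complies with the license terms and is an authorized commercial partnership, and congratulating Cursor on the release of Composer 2.

With that, the legal and licensing questions in the dispute are largely settled. Even so, Cursor's deliberate avoidance of base-model information at launch is still reverberating in the developer community.

"Note-taking" reinforcement learning: Cursor's own technical account

Despite the controversy over where the base model came from, Cursor's technical work has value in its own right.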

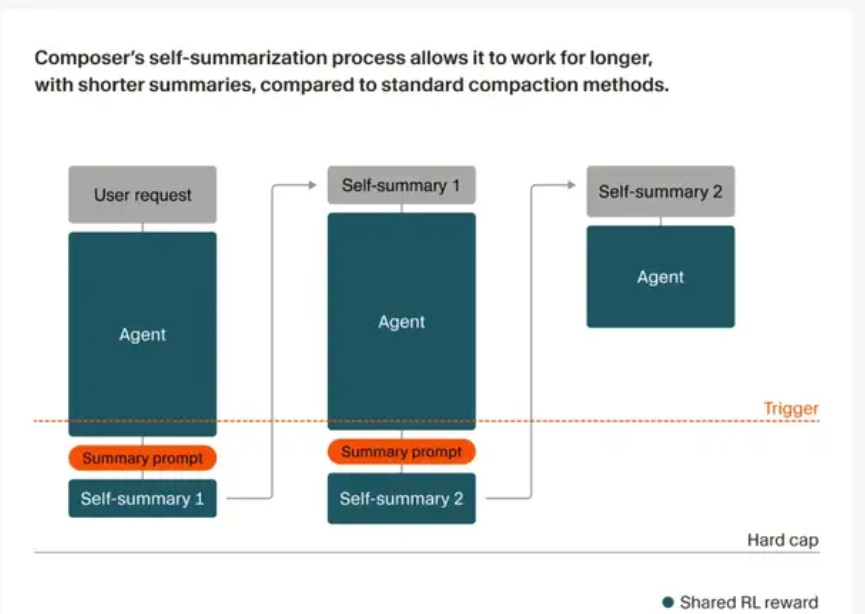

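In its blog post, Cursor describes its core method in detail: a reinforcement learning mechanism called "self-summary." It targets a known pain point of AI coding assistants, which tend to drift off course on very long, complex tasks because their context window is limited.

Specifically, while carrying out a task, the model pauses whenever it reaches a fixed token-length trigger, writes an interim summary, and then continues the task from the compressed context. This summarization ability is built into the reinforcement learning reward: the better the summary and the higher the success rate of the subsequent work, the larger the reward the model receives, and it is penalized otherwise.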
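
The loop described above can be sketched in a few lines. This is a toy illustration under stated assumptions, not Cursor's implementation: the trigger length, the whitespace-based token count, the placeholder summarizer, and the plus-or-minus-one outcome reward are all hypothetical stand-ins, since the source gives only the qualitative behavior.

```python
from dataclasses import dataclass, field
from typing import Callable, List

# Hypothetical trigger; the source only says "a fixed token length".
SUMMARY_TRIGGER_TOKENS = 50


def count_tokens(text: str) -> int:
    """Crude stand-in for a tokenizer: count whitespace-separated words."""
    return len(text.split())


@dataclass
class Episode:
    context: List[str] = field(default_factory=list)
    summaries_written: int = 0

    def context_tokens(self) -> int:
        return sum(count_tokens(chunk) for chunk in self.context)


def run_episode(steps: List[str], summarize: Callable[[List[str]], str]) -> Episode:
    """Append each step's output; once the context reaches the trigger,
    replace the whole context with a single summary and keep going."""
    episode = Episode()
    for step_output in steps:
        episode.context.append(step_output)
        if episode.context_tokens() >= SUMMARY_TRIGGER_TOKENS:
            episode.context = [summarize(episode.context)]
            episode.summaries_written += 1
    return episode


def episode_reward(task_succeeded: bool) -> float:
    """Simplified outcome reward: summaries that preserve what the task
    needs lead to success and are rewarded; lossy ones are penalized."""
    return 1.0 if task_succeeded else -1.0


def toy_summarize(context: List[str]) -> str:
    """Placeholder summarizer: keep the first five words of each chunk."""
    return " | ".join(" ".join(chunk.split()[:5]) for chunk in context)


if __name__ == "__main__":
    episode = run_episode(["word " * 20] * 5, toy_summarize)
    print(episode.summaries_written, episode.context_tokens())
```

In a real training setup the summarizer would be the model itself, so the reward signal would reach the summary text as well as the actions that follow it. The sketch only shows where the pause and the context replacement happen.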

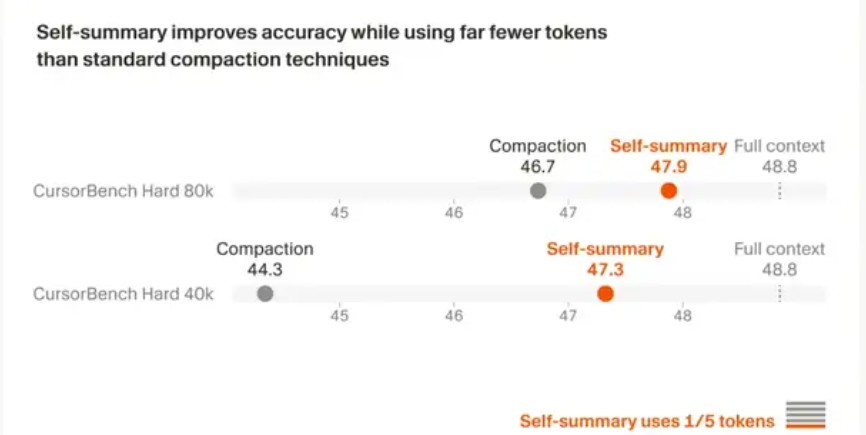

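Internal test data disclosed by Cursor show that, compared with conventional summarization methods, the approach uses only one-fifth as many tokens and reduces compression-induced errors by about 50%.

Cursor cites the difficult task of getting the game Doom to run on the MIPS architecture as an example: Composer found an exact solution after 170 turns of interaction, and compressed more than 100,000 tokens of context down to about 1,000.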
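
As a quick check on the scale of these figures, the arithmetic can be written out as below. The rounded inputs (100,000 and 1,000 tokens) are taken from the Doom example, the one-fifth factor from the reported test data, and the function names and the 5,000-token baseline are hypothetical.

```python
def compression_ratio(tokens_before: int, tokens_after: int) -> float:
    """How many times smaller the context is after summarization."""
    if tokens_after <= 0:
        raise ValueError("tokens_after must be positive")
    return tokens_before / tokens_after


def tokens_with_self_summary(baseline_tokens: int) -> float:
    """Token usage at the reported one-fifth of a conventional method."""
    return baseline_tokens / 5


if __name__ == "__main__":
    # Doom-on-MIPS example: roughly 100,000 tokens down to about 1,000.
    print(compression_ratio(100_000, 1_000))
    # Hypothetical baseline of 5,000 tokens for a conventional summary.
    print(tokens_with_self_summary(5_000))
```

With the rounded figures, the Doom example works out to roughly a hundredfold reduction in context length.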

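The open-source ecosystem and the transparency debate

The deeper discussion around this episode concerns trust between the AI application layer and the open-source ecosystem.

Clement Delangue, co-founder and CEO of Hugging Face, saw in it the value of open source, saying that Chinese open-source models have become the single largest force shaping the global AI technology stack.

Competitor Windsurf moved quickly to seize the moment, announcing that it would make Kimi K2.5 free to users for the coming week in a bid to attract Cursor users.

Analysts note that the controversy adds public pressure on Cursor at a critical point in its fundraising. Cursor is reportedly raising a new round at a $50 billion valuation.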

Cursor co-founder Aman Sanger had previously described Cursor as a new kind of company that is "neither a pure application developer nor a model provider."

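The episode shows that as open-source base models close in on top closed-source models, the balance that downstream application vendors strike between commercial packaging and technical transparency will become an issue the industry cannot avoid.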