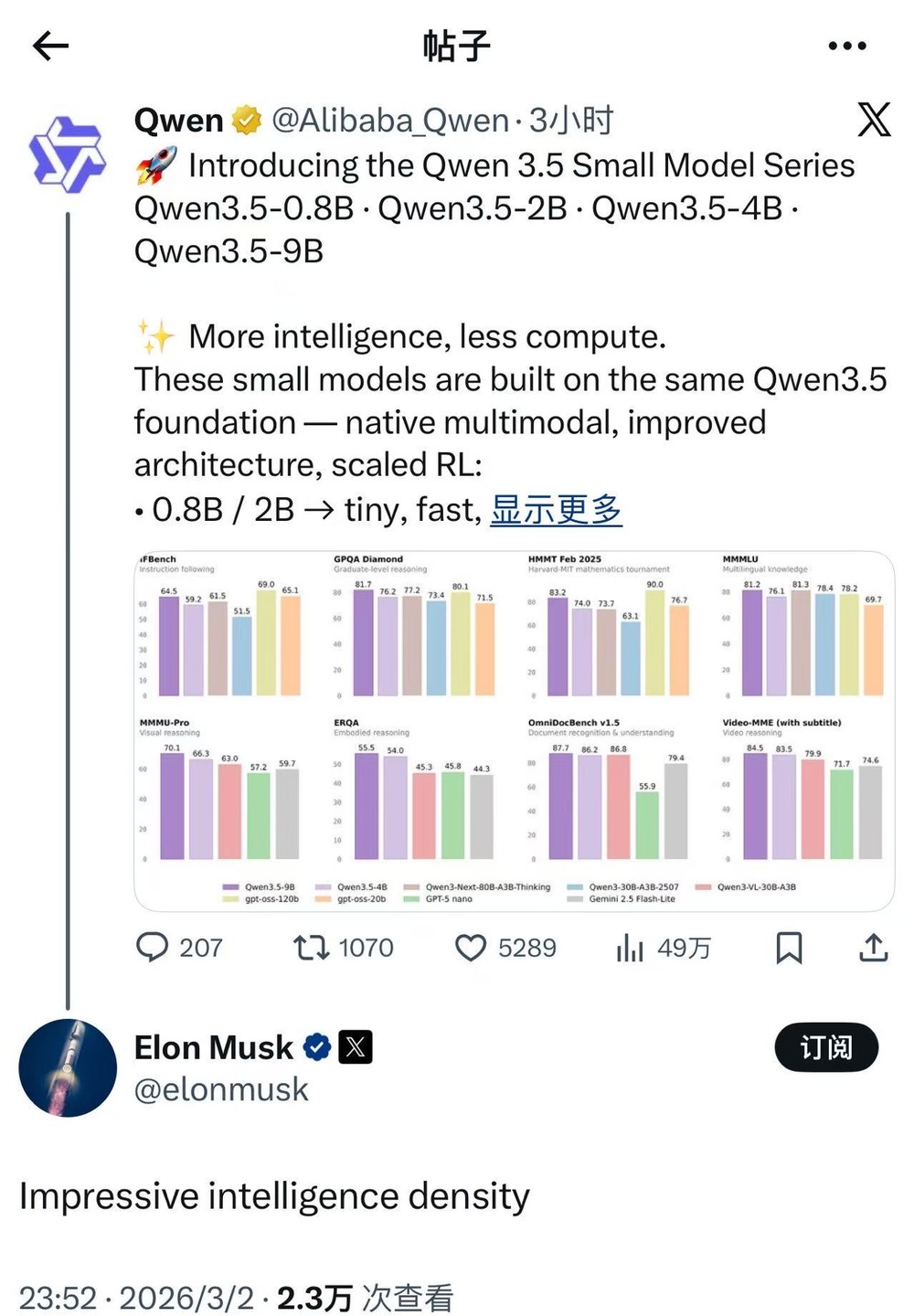

Elon Musk praises the intelligence density of Qwen's new models

Alibaba's Qwen team has open-sourced the Qwen3.5 small model series, and Musk said on X that it has "impressive intelligence density." Lin Junyang, a member of the Qwen team, thanked him. On several official benchmarks, the 9B model in the series matches or surpasses the hundred-billion-parameter-scale gpt-oss-120B, a sign that the large-model industry is shifting from pure "brute force" scaling toward the practical deployment of "lightweight intelligent agents."

Alibaba Qwen today open-sourced the Qwen3.5 small model series (0.8B, 2B, 4B, 9B).

Musk then commented on X that this batch of models has "impressive intelligence density."

Lin Junyang, a core member of the Qwen team, also retweeted and replied, "thx elon!"

What did Qwen release this time that made Musk specifically mention "intelligence density"?

The "high intelligence density" label comes down to performance: according to official benchmark results, the compact 9B version matches or even surpasses gpt-oss-120B, a model at the hundred-billion-parameter scale, on several benchmarks such as IFBench and GPQA Diamond.

As of now, all 8 models in the Qwen3.5 family, including dense and Mixture-of-Experts (MoE) models, have been open-sourced under the Apache 2.0 license. The large-model industry is clearly shifting from pure "brute force" scaling toward practical "on-device deployment" and "lightweight intelligent agents." (Hard AI)